computer vision

Images are the fuel of computer vision. In this text, the sociologists and computer science researchers Miceli Milagros and Tianling Yang, discuss the performativity of ground truth data that are produced in image labeling processes.

TPG

2023-04

Reconstructing realistic images with high semantic fidelity is still a challenging problem. Let's try with a latent diffusion model.

TPG

2021-05

What Does the Algorithm See? Panellists, artist / theorist / curator Rosa Menkman and artist Joanna Zylinska join Dr Rachel O’Dwyer, NCAD.

TPG

2020-10

“Question to twitterverse: A lovely PhD student and I are looking for papers and other projects from the social sciences and humanities on computer vision, image recognition and on the production of …

TPG

2020-09

We propose Localized Narratives, a new form of multimodal image annotations connecting vision and language. We ask annotators to describe an image with their voice while simultaneously hovering their …

We propose Localized Narratives, an efficient way to collect image captions with dense visual grounding. We ask annotators to describe an image with their voice while simultaneously hovering their …

A speculative remix that confronts Epic Kitchens, a dataset of first-person cooking videos, with quotations from literature written during or about prior pandemics such as the bubonic plague and the global influenza pandemic of 1918-19.

2020-04

This article is an overview of the projects 'Epic Handwashing in a Time of Lost Narratives' and 'A Kitchen of One's Own' weaving a thread between the technical and the conceptual: the projects are linked historically by the writing and arguments put forth by Virginia Woolf, technologically by computational juxtapositions of text and image, as well …

TPG

2020-01

Early Modern Computer Vision - Leonardo Impett https://docs.google.com/document/d/1LKs82uKkSgQ-4wGUQ4Dwzxgnerx2e6zbHf4iGIHuJmI/edit#heading=h.60chgdizcy6h

Reconstructing 3D human shape and pose from a monocular image Reconstructing 3D human shape and pose from a monocular image is challenging despite the promising results achieved by the most recent …

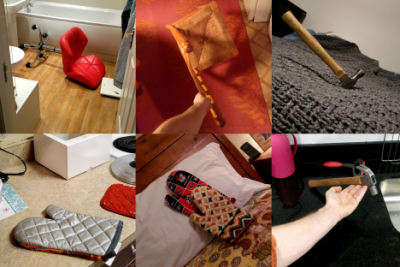

Objects are posed in varied positions and shot at odd angles to spur new AI techniques.

In Heather Dewey-Hagborg’s artwork ‘How do you see me?’, commissioned for the Data/Set/Match programme at The Photographers’ Gallery, the artist explores how machines see us. A question that has been carefully slipping through several areas of production and research during the past couple of decades. At the same time an essential need has also …

In September 2019 the ImageNet creator Fei-Fei Li gave a talk at The Photographers' Gallery talking through the events and key people that led to the creation of visual datasets.

TPG

2019-11

Computer vision relies on algorithms to make sense of the world. Unseen Portraits investigates what face recognition algorithms consider to be a human face. We…

In 2019 The Photographers' Gallery digital programme launched 'Data / Set / Match', a year-long programme that explores new ways to present, visualise and interrogate contemporary image datasets. This introductory essay presents some key concepts and questions that make the computer vision dataset an object of concern for artists, photographers, …

TPG

2019-09

Three New Cameras Camera Restricta - Philipp Schmitt, 2015 Camera Restricta is a speculative design of a new kind of camera. It locates itself via GPS and searches online for photos that have been …

TPG

2019-09

DeepPrivacy A fully automatic anonymization technique for images. This repository contains the source code for the paper “DeepPrivacy: A Generative Adversarial Network for Face …

The success of ImageNet highlighted that in the era of deep learning, data was at least as important as algorithms. Not only did the ImageNet dataset enable that very important 2012 demonstration of …

While humans pay attention to the shapes of pictured objects, deep learning computer vision algorithms routinely latch on to the objects’ textures instead Image: Robert Geirhos …

6,295 Likes, 608 Comments - Bill Posters (@bill_posters_uk) on Instagram: “‘Imagine this...’ (2019) Mark Zuckerberg reveals the truth about Facebook and who really owns the…”

TPG

2019-05

Let’s play Name That Dataset!!! https://people.csail.mit.edu/torralba/research/bias/

TPG

2019-04

VFRAME Adam Harvey A collection of open-source computer vision software tools designed specifically for human rights investigations that rely on large datasets of visual media. Specifically VFRAME is …

TPG

2018-06

Explore Airbnb listings through algorithmically generated collages.

TPG

2017-12

http://www.themtank.org/a-year-in-computer-vision

TPG

2017-11

CCamera is the first camera app that takes images that have already been uploaded to the internet and brings your photos to the next level — because they’re not yours.

TPG

2017-02

source: http://sevenonseven.rhizome.org

TPG

2016-12

https://arxiv.org/abs/1612.07828

2016-11

“The truth is written all over our faces” was a tagline for Lie to Me, a procedural drama on network television several years ago.

“The idea is about making faces communicate with each other,” he says. He designed the software to recognize facial expressions and their related emotions. While you watch, an algorithm uses the …

TPG

2016-11

Luda Zhao, Brian Do and Karen Wang

#deepdream is appealing because it gives us access to machine pareidolia, an area of great artistic interest before Google got involved – see Henry Cooke‘s experiments with faces-in-the-cloud and …

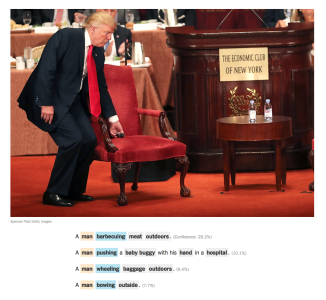

This video demonstrates both the impressive capabilities of neural captioning systems, as well as the humorous (and maybe unsettling) limitations of such systems when their training data lack the …

The mirror avoids faces. One can look at his/her face in the mirror only with a nonface. A work by the Shinseungback Kimyonghun artist group

TPG

2016-10

from Katrina Sluis, Future Semantic Web in How To Run Faster 1, published by @arcadiamissa, 2011

Computer Vision: On the Way to Seeing More source: New York Times / imSitu

TPG

2016-09

prostheticknowledge: UberNet Computer Vision research from Iasonas Kokkinos demonstrates method neural networks can assist in the field, encompassing various methods into one system: In this work we …

TPG

2016-09

A robot solving the Instant Insanity puzzle. The film was shot in 1971 in the Stanford AI Lab.

Eye-tracking technology helps us understand how people interact with their environment. This can improve policy and design, but can also be a tool for surveillance and control.

Example of an eyetracking “heatmap” that shows how much users are likely to look at different parts of a video. On the left the real eyetracking, on the right a computer model of human …

TPG

2016-08

New detection technologies will move us toward a more precise understanding of images.